Sunday, 14th January 2024: Happy to get this post out within the first month of 2024! I started writing this in November 2023 and was hoping to get it out sooner. But then my second daughter 👧 arrived, putting some extra constraints on how much free time I could find. Now, as I am finally getting back on schedule, I am delighted to bring this post to completion.

This blog post deviates a bit from the usual focus on performance-related aspects, and there's a specific reason for this. I came across the C-Reduce tool only during the last few years, and I am quite impressed by how helpful it can be, especially during development with the latest and ever-evolving compiler toolchains and programming models in HPC. Had I known about this tool earlier, I'm confident it could have saved me many days of effort and facilitated the creation of better reproducers. So by choosing this topic for a blog post, my primary aim was to understand C-Reduce better and document instructions for my own future reference. So let's get started... 🚀!

Motivation

As an application developer or performance engineer, compiler bugs are not our daily concern. However, for some, this scenario might be all too familiar. I have very distinct decade-old memories (since 2014) of fighting with the Cray compiler toolchain while porting a large scientific application to GPUs using the OpenACC programming model. Back then, the PGI compiler toolchain (now NVHPC) was working better, but compiling the same code with the Cray toolchain resulted in compiler segfaults. I found myself toggling between Cray and PGI compilers, manually commenting out code sections one by one to zero in on the problematic OpenACC offload regions. It was a time-consuming process already, and hence there was little motivation to create a standalone test for the compiler support team. As the compiler bug-fix and release cycles are long, we were often looking at how to work around a particular compiler bug by using a different construct or rearranging code.

Fast forward ten years, and that sense of déjà vu still lingers. While compiler toolchains have made significant strides in adapting to the latest standards and language evolutions, the unyielding progress of programming models, tailored to catch up with contemporary CPU/GPU architectures, consistently pushes the boundaries. When working at the cutting edge of both software and hardware platforms, as we do in HPC, grappling with compiler bugs remains an ever-present reality.

During the last few years my colleagues at the Blue Brain, Olli Lupton and Ioannis Magkanaris, did excellent work and spearheaded the efforts of OpenACC/OpenMP GPU porting with the NVHPC compiler toolchain. We have seen several compiler/runtime issues that have been reported here, here, and here. The support on the NVIDIA forum has been excellent (kudos to Mathew Colgrove!). Olli did an excellent job of providing concise reproducers to pinpoint compiler issues, thanks to C-Reduce! During this work, Olli introduced the C-Reduce tool to us. Initially, I wasn't certain about the tool's effectiveness. However, as I saw example after example, I became thoroughly impressed. Until this summer, Olli managed all our NVHPC challenges. With new issues arising, I thought it would be time to gain a more in-depth understanding of this tool.

So, this post introduces C-Reduce, an efficient tool designed for the automatic reduction of large application codes. While its primary purpose is to assist developers in identifying and reporting bugs in compilers, my practical experience has shown that C-Reduce is a valuable tool for application developers beyond reporting compiler-related bugs. As you explore the examples presented below, I am confident that you will be impressed and discover potential use cases applicable to your own development scenarios. So I encourage you to take a closer look!

C-Reduce In A Nutshell

C-Reduce is a test case reduction framework, initially designed to automate the process of creating concise bug-triggering code for compilers. The tool originated from the efforts of John Regehr and his group at the University of Utah. C-Reduce takes large application code as input and transforms it into a more compact version that still exhibits the same code behavior. Note that we refer to "code behavior" rather than explicitly labeling it as a "bug". This is because the tool remains neutral regarding whether it's an actual compiler bug or something else. In subsequent sections, we will see what we mean by "code behavior".

The core idea behind C-Reduce is delta debugging. The delta debugging algorithm systematically narrows down the input code triggering a bug so that developers can locate and fix the issue with less effort. Andreas Zeller's 1999 paper Yesterday, my program worked. Today, it does not. Why? gives a good background about the delta debugging technique and motivating examples of its origin. In short, the delta debugging algorithm starts with an input and incrementally reduces it by removing or simplifying parts of it. After each reduction, it re-runs the program or "interestingness test" to check if the failure still occurs. If the failure persists, it keeps the smaller input and continues the reduction process iteratively until no further reduction still triggers the bug. It's not necessary to know about delta debugging to use C-Reduce, but it's interesting to see how the technique originated and how mature and useful it has become over the years. If you are curious about the original tool, you can find the source in the GitHub repository: github.com/dsw/delta.
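To make the idea concrete, here is a minimal sketch in Python of a greedy, line-based reducer in the spirit of delta debugging. It is not C-Reduce's actual algorithm (which applies many language-aware passes), and the names reduce_lines and is_interesting are my own, chosen only for illustration. The predicate plays the role of the interestingness test.

```python
from typing import Callable, List


def reduce_lines(lines: List[str],
                 is_interesting: Callable[[List[str]], bool]) -> List[str]:
    """Greedy, simplified delta-debugging-style reduction (illustrative only)."""
    assert is_interesting(lines), "the starting input must be interesting"
    chunk = max(len(lines) // 2, 1)
    while chunk >= 1:
        i = 0
        while i < len(lines):
            # Try dropping `chunk` consecutive lines starting at position i.
            candidate = lines[:i] + lines[i + chunk:]
            if is_interesting(candidate):
                lines = candidate  # keep the smaller variant, retry at the same position
            else:
                i += chunk  # this chunk is needed, move on to the next one
        chunk //= 2  # retry with finer-grained removals
    return lines


program = ["int a;", "int b;", "bug();", "int c;", "return 0;"]
reduced = reduce_lines(program, lambda ls: "bug();" in ls)
print(reduced)  # → ['bug();']
```

The sketch removes large chunks first and then progressively smaller ones, which is why a good reducer needs far fewer test runs than trying every line individually. It makes no claim of finding the globally smallest input.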

Despite the name "C-Reduce" implying a focus on the C programming language, the core framework is versatile and not confined to a specific domain or language. While it incorporates a set of passes tailored for C/C++ codes, enhancing its effectiveness for applications in these languages, the framework can be used with other languages too, such as Java, Rust, Julia, Haskell, and Python. We will see a few examples of this in the subsequent sections. For a comprehensive overview and deeper insights into the tool, I recommend the PLDI'2012 paper, Test-case reduction for C compiler bugs, as an informative read. The preprint is directly accessible here. I have linked additional resources, including blog posts, in the References section.

Installation

For the sake of completeness, let's quickly see how one can get the tool installed.

System Package Managers

C-Reduce is readily available as a binary package on major operating system distributions, including Ubuntu, Fedora, FreeBSD, OpenBSD, and through MacPorts/Homebrew package managers on MacOS:

```bash
# on Ubuntu
apt-get install -y creduce

# on MacOS
brew install creduce
```

Spack

When working with external systems, such as HPC clusters, it's often the case that we work with proprietary vendor compilers, which makes it difficult to reproduce issues on a local desktop or laptop. In such situations, having C-Reduce readily available on the same system is very handy. In the HPC domain, Spack is the most commonly used package manager. A few years ago, my colleague contributed the C-Reduce Spack package. I made some minor fixes in C-Reduce to address build issues and fix compatibility with the latest LLVM releases. With the latest version of Spack, the installation process is straightforward:

```bash
spack install creduce
```

It's worth mentioning that installing LLVM from source is quite time-consuming. If you already have LLVM available, I would recommend adding it as an external package in Spack.

Building From Source

Building C-Reduce from source is straightforward if you already have LLVM installed:

```bash
# On Linux distributions like Ubuntu, install LLVM if not already available
wget https://apt.llvm.org/llvm.sh && chmod +x llvm.sh && sudo ./llvm.sh 16 all

# On MacOS
brew install llvm

# Clone C-Reduce repo
git clone https://github.com/csmith-project/creduce.git
mkdir creduce/build && cd creduce/build

# On Linux, replace /usr/lib/llvm-16/ with a directory where LLVM is installed
cmake .. -DLLVM_DIR=/usr/lib/llvm-16/lib/cmake/llvm -DCMAKE_INSTALL_PREFIX=$HOME/install/creduce

# Or, on MacOS
cmake .. -DCMAKE_INSTALL_PREFIX=$HOME/install/creduce/ -DLLVM_DIR=/usr/local/opt/llvm/lib/cmake/llvm/ -DClang_DIR=/usr/local/opt/llvm/lib/cmake/clang/

# build and install
make -j install
export PATH=$HOME/install/creduce/bin:$PATH
```

With this, we will have access to the creduce command. If that's the case, we are ready to get started!

Basic Usage

Let's take a look at the basic usage of C-Reduce. Such examples are already covered in other tutorials and blog posts, but I wanted to have this here for completeness.

A Sample Code: Hello World

Here is a simple example that prints a "Hello World!" message and the sum of two arrays to stdout. Nothing interesting here!

```cpp
#include <iostream>
#include <array>
#include <algorithm>
#include <iterator>

std::array<int, 3> add_arrays(const std::array<int, 3>& arr1, const std::array<int, 3>& arr2) {
    std::array<int, 3> result;
    std::transform(arr1.begin(), arr1.end(), arr2.begin(), result.begin(), std::plus<>());
    return result;
}

void print_array(const std::array<int, 3>& arr) {
    std::cout << "Result: ";
    std::copy(arr.begin(), arr.end(), std::ostream_iterator<int>(std::cout, " "));
    std::cout << std::endl;
}

void say_hello() {
    std::cout << "Hello World!" << std::endl;
}

int main() {
    say_hello();

    constexpr std::array<int, 3> vector1 = {1, 2, 3};
    constexpr std::array<int, 3> vector2 = {4, 5, 6};
    // intentional error for demo
#ifdef WITH_COMPILE_ERROR
    vector1[0] = 7;
#endif

    std::array<int, 3> result = add_arrays(vector1, vector2);
    print_array(result);

    return 0;
}
```

Compilation Issue And Desired Reproducer

With the above example, let's first explore a type of error that is easy to identify: compiler bugs resulting in compilation errors or crashes. In the above example, we intentionally introduced a compilation error within the #ifdef WITH_COMPILE_ERROR #endif block. If we compile the code with -DWITH_COMPILE_ERROR, the output is:

```text
$ clang++ -std=c++17 hello.cpp -DWITH_COMPILE_ERROR

hello.cpp:29:16: error: cannot assign to return value because function 'operator[]' returns a const value
    vector1[0] = 7;
    ~~~~~~~~~~ ^
/Library/Developer/CommandLineTools/SDKs/MacOSX.sdk/usr/include/c++/v1/array:204:5: note: function 'operator[]' which returns const-qualified type
      'std::__1::array<int, 3>::const_reference' (aka 'const int &') declared here
    const_reference operator[](size_type __n) const _NOEXCEPT {
    ^~~~~~~~~~~~~~~
1 error generated.
```

Assume, for the sake of the demo, that this compilation error is not desired and that C++ should allow us to modify constexpr variables. In this case, if we wanted to create a simple, minimal reproducer from the above example, we could come up with the following code:

```cpp
#include <array>

int main() {
    constexpr std::array vector1 = {1, 2, 3};
#ifdef WITH_COMPILE_ERROR
    vector1[0] = 7;
#endif
}
```

In this example of ~40 lines of the original code, identifying such an issue and creating a reproducer like the one above is not challenging. However, in production applications with source files containing thousands of lines of code, this could become a time-consuming task. Assuming this, let's see how we can utilize C-Reduce to automate this process.

C-Reduce In Action : Compilation Errors

The basic syntax for using C-Reduce is the following:

```bash
creduce interestingness.sh program.cpp
```

where

- program.cpp is the input source code that we want to minimize/reduce through the reduction process
- interestingness.sh is a script that defines the "interestingness test"

The interestingness test plays a central role in C-Reduce's usage, acting as a driver in the reduction process of input code. Typically it consists of a series of commands that determine the direction of the reduction process. With each modification C-Reduce makes to the program, it triggers the execution of this script. The decision-making process relies on the exit code produced by the script: an exit code of 0 indicates that the reduction meets specified conditions, making the reduced program a suitable candidate for further iterations. Conversely, a non-zero exit code denotes failure, signaling that the reduced program requires additional modifications. This exchange of information through exit codes establishes the language of interaction between C-Reduce and the evaluation script, guiding the reduction process.
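To illustrate this exit-code contract, here is a toy sketch in Python of how a reducer could consult an interestingness test. This is not how C-Reduce is implemented. The names TOY_TEST, is_interesting and step are hypothetical, and the inline test (a stand-in for an interestingness script) simply checks whether hello.cpp still contains "Hello World". The candidate is written to a scratch directory and the test is run from there, which is my understanding of why the test must not depend on where it is executed.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical stand-in for an interestingness script: exits with 0 only if
# hello.cpp (in the current directory) still contains the text we care about.
TOY_TEST = (
    "import sys, pathlib\n"
    "text = pathlib.Path('hello.cpp').read_text()\n"
    "sys.exit(0 if 'Hello World' in text else 1)\n"
)


def is_interesting(variant: str) -> bool:
    """Write the variant to a scratch directory, run the test there,
    and translate exit code 0 into True (anything else into False)."""
    with tempfile.TemporaryDirectory() as scratch:
        Path(scratch, "hello.cpp").write_text(variant)
        result = subprocess.run([sys.executable, "-c", TOY_TEST], cwd=scratch)
        return result.returncode == 0


def step(current: str, candidate: str) -> str:
    """Keep the candidate only when the test reports it as interesting."""
    return candidate if is_interesting(candidate) else current


current = 'int unused; int main() { puts("Hello World"); }'
current = step(current, 'int main() { puts("Hello World"); }')  # accepted
current = step(current, 'int main() { }')                       # rejected
print(current)
```

The only information flowing back from the test to the reducer is the exit code, so anything the test prints is purely for the human watching the run.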

Let's write our first interestingness test and then it will become clear what we mean by the above. Here is the example that I came up with:

```bash
#!/bin/bash

# step 1: make sure #ifdef is retained
grep -q "#ifdef WITH_COMPILE_ERROR" hello.cpp || exit 1

# step 2: make sure code is still valid C++ code
clang++ -c -std=c++17 hello.cpp || exit 1

# step 3: make sure the code fails only with -DWITH_COMPILE_ERROR and has the expected compilation error
compile_output=$(clang++ -c -std=c++17 hello.cpp -DWITH_COMPILE_ERROR 2>&1)

if [[ $compile_output == *"error: cannot assign to return value because function 'operator[]' returns"* ]]; then
    echo "Still fails with compile error"
    exit 0
fi

# otherwise, the reduction is not interesting
exit 1
```

Let's break this down a bit:

- With the first grep command, we want to make sure the #ifdef block is retained in the code. This is not required to reproduce the error. But you can see how one can retain some of the key aspects of the code using a simple grep command. If grep fails to find the specified string, then we are exiting the script with exit 1. This way, we tell C-Reduce that the generated code is not interesting to us and should be discarded.
- Then, in the second step, we ensure that the produced code compiles successfully with clang++. You might question if this step is necessary. The reason I retained this is because C-Reduce might generate code with additional syntax or other types of errors. With this compilation command, I am verifying that the code compiles correctly without the -DWITH_COMPILE_ERROR flag.
- Finally, the main step, where we verify that we are still encountering the expected error. We compile the code with -DWITH_COMPILE_ERROR and confirm that the error "cannot assign to return value because function 'operator[]' returns" is present in the compiler output. If this error is obtained, then the test is deemed interesting for us. By exiting with an exit code of 0, we communicate to C-Reduce that the generated code is of interest and should be considered for further reduction steps.

Straightforward, right? Let's now run C-Reduce to perform the actual reductions:

```bash
# make sure the interestingness test has an execute permission
chmod +x interestingness.sh

# launch creduce
creduce interestingness.sh hello.cpp
```

This produces output like the following:

```text
...
running 3 interestingness tests in parallel
===< pass_unifdef :: 0 >===
===< pass_comments :: 0 >===
===< pass_ifs :: 0 >===
===< pass_includes :: 0 >===
(2.4 %, 860 bytes)
timestamp 3 size 860
(4.7 %, 840 bytes)
timestamp 4 size 840
===< pass_line_markers :: 0 >===
...
===< pass_lines :: 10 >===
(58.5 %, 366 bytes)
timestamp 58 size 366
===< pass_clang_binsrch :: replace-function-def-with-decl >===
===< pass_clang_binsrch :: remove-unused-function >===
...
===< pass_indent :: final >===
(88.3 %, 103 bytes)
timestamp 335 size 103
===================== done ====================

pass statistics:
  method pass_balanced :: angles worked 1 times and failed 2 times
  method pass_clex :: rm-tok-pattern-4 worked 1 times and failed 512 times
  method pass_blank :: 0 worked 1 times and failed 1 times
...
```

We don't necessarily need to fully comprehend the details of what C-Reduce is printing. In essence, C-Reduce provides information about the progress of the reduction process. This includes details about the various reduction passes applied to the code, a percentage indicating how much the code size has been reduced, the current size of the code in bytes, statistics about the success or failure of passes throughout the reduction process, and finally, the end result or the reduced code after applying all the passes. The original input hello.cpp is backed up as hello.cpp.orig, and the resulting hello.cpp is updated with the code below:

```cpp
#include <array>
void a() {
  constexpr std::array b{3};
#ifdef WITH_COMPILE_ERROR
  b[0] = 7
#endif
}
```

This is precisely what one would desire, isn't it? Just to have an idea, the above example ran for ~2 minutes on my quad-core Intel Core i5 CPU.

C-Reduce In Action: Runtime Behaviour / Errors

Let's assume we are not dealing with compilation errors but a particular runtime behavior, and our goal is to generate a minimal code that exhibits this specific runtime behavior. While it might not showcase much with our Hello World example, let's consider the runtime behavior to be the output Hello World!. We would like to produce a minimal application code that generates this output and eliminate everything else. For this, our interestingness test would look like:

```bash
#!/bin/bash

g++ -std=c++17 hello.cpp -o hello && ./hello | grep -q "Hello World"
```

And we can launch C-Reduce as:

```bash
$ chmod +x interestingness.sh
$ creduce ./interestingness.sh hello.cpp
```

After a few minutes, hello.cpp is updated with the following code:

```cpp
#include <iostream>
int main() { std::cout << "Hello World!"; }
```

Awesome, isn't it?

When I first explored C-Reduce, my impression was that its application was predominantly geared toward addressing compilation errors. However, as illustrated in this example, C-Reduce showcases its versatility to accommodate various reduction tasks, not necessarily confined to compiler bugs.

Real World Example

Now, let's delve into a real-world example from a production application. During the process of porting the NEURON simulator to GPUs using the OpenMP/OpenACC programming model, I encountered the following compiler bug. The code compiles without issues for the CPU (without the -mp=gpu flag), but it triggers a compiler crash when compiling for the GPU target:

```text
$ nvc++ -O2 --c++17 -c cacumm.cpp          # compiles fine
$ nvc++ -O2 --c++17 -mp=gpu -c cacumm.cpp  # crashes compiler
...
          ^

1 catastrophic error detected in the compilation of "cacumm.cpp".
Compilation aborted.
nvc++-Fatal-/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/bin/tools/cpp1 TERMINATED by signal 6
Arguments to /opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/bin/tools/cpp1
/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/bin/tools/cpp1 --llalign -Dunix -D__unix -D__unix__ -Dlinux -D__linux -D__linux__ -D__NO_MATH_INLINES -D__LP64__ -D__x86_64 -D__x86_64__ -D__LONG_MAX__=9223372036854775807L '-D__SIZE_TYPE__=unsigned long int' '-D__PTRDIFF_TYPE__=long int' -D__amd64 -D__amd64__ -D__k8 -D__k8__ -D__MMX__ -D__SSE__ -D__SSE2__ -D__SSE3__ -D__SSSE3__ -D__ABM__ -D__SSE4_1__ -D__SSE4_2__ -D__AVX__ -D__AVX2__ -D__F16C__ -D__FMA__ -D__XSAVE__ -D__XSAVEOPT__ -D__XSAVEC__ -D__XSAVES__ -D__POPCNT__ -D__SHA__ -D__AES__ -D__PCLMUL__ -D__CLFLUSHOPT__ -D__FSGSBASE__ -D__RDRND__ -D__BMI__ -D__BMI2__ -D__LZCNT__ -D__FXSR__ -D__PKU__ -D__GFNI__ -D__VAES__ -D__VPCLMULQDQ__ -D__MOVDIRI__ -D__MOVDIR64B__ -D__PGI -D__NVCOMPILER -D_GNU_SOURCE -D_PGCG_SOURCE --c++17 -I- -I/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/extras/qd/include/qd -I/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/comm_libs/nvshmem/include -I/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/comm_libs/nccl/include -I/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/comm_libs/mpi/include -I/opt/nvidia/hpc_sdk/Linux_x86_64/23.1/math_libs/include --sys_include /opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/include --sys_include /opt/nvidia/hpc_sdk/Linux_x86_64/23.1/compilers/include-stdexec --sys_include /opt/nvidia/hpc_sdk/Linux_x86_64/23.1/cuda/12.0/include --sys_include /usr/include/c++/11 --sys_include /usr/include/x86_64-linux-gnu/c++/11 --sys_include /usr/include/c++/11/backward --sys_include /usr/lib/gcc/x86_64-linux-gnu/11/include --sys_include /usr/local/include --sys_include /usr/include/x86_64-linux-gnu --sys_include /usr/include -D__PGLLVM__ -D__NVCOMPILER_LLVM__ -D__extension__= -D_OPENMP=202011 -DCUDA_VERSION=12000 -DPGI_TESLA_TARGET -D_PGI_HX -DCUDA_FORCE_CDP1_IF_SUPPORTED --preinclude _cplus_preinclude.h --preinclude_macros _cplus_macros.h --gnu_version=110400 -D__pgnu_vsn=110400 --no_fixed_bp --target_gpu --mp -D_OPENMP=202011 -D_NVHPC_RDC -q -o /tmp/nvc++WvLhqK6zkNYr.il cacumm.cpp
```

In our case, the source code of the input file cacumm.cpp is generated from NMODL DSL using the NMODL Compiler Framework, a project we are actively developing. While the input file is relatively small, around 400 lines of C++ code, there are a few key points to note:

- It incorporates the Eigen library within offload regions. We faced difficulties using Eigen in OpenMP/Offload regions, and it wasn't immediately clear whether this was an issue with the Eigen library, our code, or a potential NVHPC compiler bug.

- The code includes various NEURON and Eigen include files, resulting in a preprocessed code of approximately 185k lines. While the size may not be a critical concern, especially for internal use, providing a concise and understandable reproducer becomes crucial when creating a reproducer for submission. Additionally, when dealing with proprietary codes, we want to provide a smaller code without revealing proprietary implementation details. From the perspective of compiler developers, a concise and easily comprehensible reproducer saves time.

When I first submitted the bug report on the NVIDIA forum in September 2022 here, I wasn't well-acquainted with C-Reduce. Therefore, I took a manual approach, systematically removing or commenting out parts of the code that seemed unrelated to check if the error persisted. While this method wasn't overly laborious, it might be time-consuming/boring/frustrating depending on the familiarity or the complexity of the auto-generated DSL code. If you look at the reproducer I submitted, it was quite succinct. Let's now see if and how C-Reduce can help in this case.

If you want to play with this example, make sure to install an NVHPC compiler release older than 23.3. Then, download the preprocessed code that I have provided as a GitHub gist:

```bash
wget https://gist.githubusercontent.com/pramodk/70f89e2790cc240d4a4e9850059c3c1d/raw/ae525ac3f5059c7c9a0d517f9f4254e105266443/cacum.cpp
```

As you might imagine, different people may compose the interestingness test in different ways. Here is what I came up with:

```bash
#!/bin/bash

# Make sure compilation for CPU is OK
nvc++ -mp -g -O2 --c++17 -c cacum.cpp >/dev/null 2>&1 \
    || { echo "Doesn't compile for CPU!"; exit 1; }

# See if GPU compilation produces the desired error
compile_output=$(nvc++ -g -O2 --c++17 -mp=gpu -c cacum.cpp 2>&1)

if [[ $compile_output == *"internal error: assertion failed: lower_expr"* && \
      $compile_output == *"TERMINATED by signal 6"* ]]; then
    echo "Fails for GPU!"
    exit 0
else
    exit 1
fi
```

If you are following this example after the one from the previous Basic Usage section this should be straightforward:

- In the first compilation command, we make sure the reduced code is still valid and compiles for CPU.

- In the second compilation command, we compile the code with the -mp=gpu flag for GPU offload and capture the stdout/stderr. We check if the output contains our desired compilation error.

Now let's launch C-Reduce like before:

```bash
chmod +x interestingness.sh

module load nvhpc/23.1

creduce interestingness.sh cacum.cpp
```

And C-Reduce updates cacum.cpp with the following code:

```cpp
struct a {
  int compute_gpu;
};
int b;
void c() {
  a *nt;
  _Pragma("omp target teams distribute parallel for if(nt->compute_gpu)")
  for ( int d = 0; d < b; d++)
    struct e {
      void f() {}
      e(void);
    };
}
```

When I looked at the above and compared it with my "manually crafted" reproducer, I was genuinely impressed and convinced that C-Reduce excels in its job!

Interesting Insights

Beyond a basic usage of C-Reduce (which is covered in other tutorials and blog posts), here is a curated list of topics or questions that I have gathered and find interesting, and I thought it's worth mentioning here. I don't think it is necessary to provide detailed examples for everything, as the post has already grown larger than my initial plan!

Q1. How to use C-Reduce for debugging runtime issues in large, complex applications?

If a compiler bug is causing compilation errors, it's clear how to use C-Reduce: follow a pattern similar to what we discussed earlier. But how do you handle compiler bugs that generate buggy assembly code and result in runtime failures? In such cases, it's not as straightforward as running the compilation command and capturing compiler output to search for an error. Often, the application involves intricate builds and runtime inputs, and there isn't a one-size-fits-all strategy. Here are some thoughts/strategies that I've come up with when debugging our own examples:

- Separate the code logically into sections that are meant for C-Reduce reduction and others that may be bug-free or uninteresting. This way, the interestingness test can focus specifically on the logically separated code.

- In cases where application builds are complex and time-consuming, it is advisable not to include the entire build step as part of the interestingness test. Instead, explore the possibility of building a separate shared library from a logically separated code segment. This approach allows us to run C-Reduce on a smaller code segment, create a shared library, and execute the application to check for the persistence of the bug.

For the sake of illustration, let's assume our "Hello World" example is divided into separate files, resulting in a structure like the one below:

```cpp
#include <iostream>
#include <array>

#include "array.h"
#include "hello.h"

int main() {
    sayHello();

    constexpr std::array<int, 3> vector1 = {1, 2, 3};
    constexpr std::array<int, 3> vector2 = {4, 5, 6};

    std::array<int, 3> result = addArrays(vector1, vector2);
    printArray(result);

    return 0;
}
```

and then we compile this application into multiple shared libraries:

```bash
# Compile array.cpp into a shared library
g++ -std=c++17 -c -fPIC array.cpp -o array.o
g++ -shared -o libarray.so array.o

# Compile hello.cpp into a shared library
g++ -std=c++17 -c -fPIC hello.cpp -o hello.o
g++ -shared -o libhello.so hello.o

# Compile main.cpp linking with both shared libraries
g++ -std=c++17 main.cpp -L. -larray -lhello -o program
```

Now, assuming we are only interested in the "Hello World" output and do not want to modify libarray.so and libhello.so, our interestingness test could look like the following, where we want to manipulate only main.cpp:

```bash
#!/bin/bash

# TODO: set LD_LIBRARY_PATH or add rpath as C-Reduce expects the interestingness test to be relocatable
clang++ -std=c++17 main.cpp -o program -larray -lhello || exit 1

# run the binary we just built (named "program" by the -o flag above)
./program | grep -q "Hello World"
```

We can now launch creduce as usual:

```bash
creduce --timing interestingness.sh main.cpp
```

After a few minutes, the reduced main.cpp from C-Reduce looks like the following:

```cpp
#include "hello.h"
int main() { sayHello(); }
```

Note that the prototype of "sayHello()" is determined by libhello.so (from hello.cpp), and it's outside the scope of the C-Reduce reduction process. Hence, this is an expected output.

This is just one example of how you can think about structuring your application. Depending on your application and the issue you are debugging, you can come up with other strategies.

Q2. What's the difference between C-Vise and C-Reduce?

I learned about C-Reduce first and later came across C-Vise. As mentioned earlier, C-Reduce was developed by John Regehr and his group at the University of Utah. It includes the clang_delta tool built on top of the Clang infrastructure to perform source-to-source transformations. The top, user-facing layer of C-Reduce is written in Perl.

Martin Liška, an ex-GCC maintainer, developed C-Vise while working at SUSE. It's not an entirely new tool but a Python port of C-Reduce. At its core, it still uses the same clang_delta tool from the C-Reduce framework, and hence the core source-to-source transformations are shared with C-Reduce. But the top, user-facing layer is written in Python. It is supposed to be more parallel/faster compared to C-Reduce (more about this in the next question). Additionally, Martin has done excellent work in areas like 1) porting and maintaining the tool for multiple platforms, 2) proactively fixing compatibility with new LLVM releases (where C-Reduce often lags behind), and 3) helping users get the best out of C-Vise/C-Reduce.

At a high level, both C-Vise and C-Reduce tools do the same thing: program reduction using the clang_delta tool. As C-Vise is compatible with C-Reduce, one can easily replace one with another. You will achieve the same thing!

Q3. Can I use C-Reduce (or C-Vise) in parallel? Or, why don't these tools run faster using multiple cores?

Let's address the easier part first: both C-Reduce and C-Vise provide a CLI option, --n, to specify the number of cores used for code reduction. If the --n CLI parameter is not specified, C-Vise attempts to utilize all available physical cores, while C-Reduce is conservative and defaults to a maximum of 4 physical cores. To use more cores with C-Reduce, you must explicitly pass the --n CLI option. For example, on an Intel Core i9-12900K Processor, C-Reduce starts 4 parallel tests, while C-Vise starts 16 parallel tests.

Now, moving on to the more complex aspect: If I find my reductions slow and time-consuming, can I accelerate the process by increasing the number of cores? Unfortunately, this is not always the case. A bit of background: There were instances where C-Reduce did not exhibit faster performance even with a substantial number of CPU cores (up to 40 cores). This is when I discovered C-Vise and anticipated it to have superior parallelization capabilities compared to C-Reduce. However, both tools did not yield significant improvements when the number of physical cores was increased, even up to 40. I was uncertain whether this was a configuration issue or some implementation details.

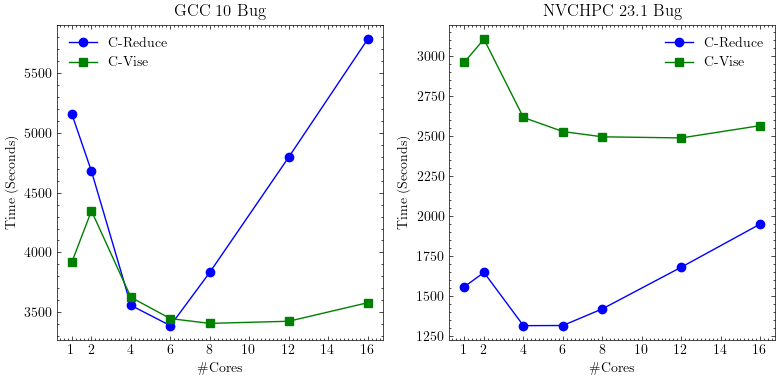

A few weeks ago I started looking at this "scaling" issue systematically and provided my findings in this GitHub issue cvise/issues/123. Here is the comparison of C-Vise and C-Reduce to reduce two bugs: one reported here with GCC v10 and another one with NVHPC (see previous section). I compared the reduction times of the two tools using up to 16 cores and the comparison looked like below:

I didn't plot error bars, etc., but ran the experiments a few times to ensure the trend is similar. In summary:

- For the GCC 10 bug, C-Vise performed better with a single core, but C-Reduce provided the best reduction time with #cores = 6. However, with a higher number of cores, C-Reduce's performance significantly degraded compared to C-Vise.

- For the NVHPC 23 bug, C-Reduce consistently performed better (up to 1.9x) compared to C-Vise.

I lack sufficient internal implementation knowledge of these tools and am not qualified to explain this behavior in detail. However, if I understand the gist correctly, the reduction time using multiple cores is constrained by the algorithm/parallelization strategy used underneath. John Regehr's blog post has a section about Parallel Test-Case Reduction in C-Reduce stating the following:

C-Reduce’s second research contribution is to perform test-case reduction in parallel using multiple cores. The parallelization strategy, based on the observation that most variants are uninteresting, is to speculate along the uninteresting branch of the search tree. Whenever C-Reduce discovers an interesting variant, all outstanding interestingness tests are killed and a new line of speculation is launched.

C-Reduce has a policy choice between taking the first interesting variant returned by any CPU, which provides a bit of extra speedup but makes parallel reduction non-deterministic, or only taking an interesting variant when all interestingness tests earlier in the search tree have reported that their variants are uninteresting. We tested both alternatives and found that the speedup due to non-deterministic choice of variants was minor. Therefore, C-Reduce currently employs the second option, which always follows the same path through the search tree that non-parallel C-Reduce would take. The observed speedup due to parallel C-Reduce is variable, and is highest when the interestingness test is relatively slow. Speedups of 2-3x vs. sequential reduction are common in practice.

Also, you can see the discussions about the reduction time speedups in C-Vise issues #41 and #114. I am still understanding/discussing this issue here and I am grateful for Martin taking the time to explain the behavior.
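To build some intuition for the speculation strategy quoted above, here is a toy model in Python. It is only a sketch under strong assumptions that are mine, not the tools': every interestingness test costs one time unit, the sequence of outcomes is fixed in advance, and an interesting variant throws away all later speculative tests in the same batch. The function name `time_units` is hypothetical, and the model does not claim to explain the measurements above.

```python
def time_units(outcomes, cores):
    """Count time units needed to walk `outcomes` with `cores` parallel tests.

    `outcomes` lists, in search order, whether each variant is interesting.
    Toy assumptions: every test costs one time unit, and an interesting
    variant invalidates all later speculative tests in the same batch.
    """
    units = 0
    pos = 0
    while pos < len(outcomes):
        batch = outcomes[pos:pos + cores]
        units += 1
        if True in batch:
            # Deterministic policy: accept the first interesting variant in
            # search order and discard the speculation that followed it.
            pos += batch.index(True) + 1
        else:
            # Speculation paid off: the whole batch was uninteresting.
            pos += len(batch)
    return units


if __name__ == "__main__":
    mostly_boring = [False] * 15 + [True]
    mostly_interesting = [True] * 16
    for cores in (1, 4, 16):
        print(cores, time_units(mostly_boring, cores), time_units(mostly_interesting, cores))
```

In this model, extra cores help only while variants keep turning out uninteresting. When almost every variant is interesting, each batch is cut short after its first test and the run is effectively sequential, whatever the core count.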

Q4. Can we use C-Reduce for non-C/C++ applications? How?

C-Reduce incorporates C/C++ language-specific passes (based on Clang) to efficiently reduce C/C++ codes. However, as evident from the discussion in this GitHub issue regarding Haskell support, C-Reduce has been successfully employed with other languages, including Java, Rust, Julia, Haskell, Racket, and Python.

Let's see this in practice with the same "hello-world" example converted to Python:

```python
from typing import List


def add_arrays(arr1: List[int], arr2: List[int]) -> List[int]:
    return [sum(x) for x in zip(arr1, arr2)]


def print_array(arr: List[int]) -> None:
    print("Result:", *arr)


def say_hello() -> None:
    print("Hello World!")


def main() -> None:
    say_hello()
    vector1 = [1, 2, 3]
    vector2 = [4, 5, 6]
    result = add_arrays(vector1, vector2)
    print_array(result)


if __name__ == "__main__":
    main()
```

Our interestingness test looks like this:

```bash
#!/bin/bash

python3 hello.py | grep -q "Hello World!"
```

And let's launch C-Reduce as:

```bash
creduce --not-c interestingness.sh hello.py
```

And after a few minutes, the generated hello.py is:

```python
print("Hello World!")
```

Voila! Did C-Reduce disappoint us? Not at all!

In the previously linked blog post, John explained why C-Reduce is also successful in non-C/C++ languages: the programming languages in the Algol and Lisp families have common structural elements. The C-Reduce core is completely domain-independent, and the Delta debugging techniques are also effective for many other programming languages.

It's worth noting that both C-Reduce and C-Vise offer a --not-c CLI option to skip the transformation passes specific to C/C++. That said, these passes fail very fast on non-C/C++ input, so leaving them enabled doesn't significantly impact the performance or effectiveness of the reduction process.

Q5. What if reduced programs take too long, or a reduced program hangs?

C-Reduce provides options to handle reduced programs that take too long or hang. By default, C-Reduce waits up to 300 seconds for the interestingness test to finish. If the test exceeds this limit, that variant is discarded.

As an application developer, you may have better insights into what an appropriate default timeout should be for your specific case. You can adjust this timeout using the --timeout CLI option. Alternatively, you can design the interestingness test using commands like ulimit:

```bash
#!/bin/bash

ulimit -t 10

compile_output=$(nvc++ -g -O2 --c++17 -mp=gpu -c cacum.cpp 2>&1)

# ....rest of the commands...
```

The use of ulimit -t 10 ensures that any command or sub-process started by the script is terminated automatically once it has used more than 10 seconds of compute time. The terminated command returns a non-zero exit code, causing the interestingness test to fail.
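An interestingness test can be any executable, so the same idea can also be written in Python. The sketch below is an assumption-laden alternative rather than something taken from the C-Reduce documentation: the helper name `passes_within` is hypothetical, and the limit applies to wall-clock time, whereas ulimit -t limits CPU time.

```python
import subprocess
import sys


def passes_within(cmd, seconds):
    """Return True if `cmd` exits with status 0 within `seconds` of wall-clock time."""
    try:
        result = subprocess.run(
            cmd,
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=seconds,
        )
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before re-raising, so a hanging
        # variant is simply treated as uninteresting.
        return False
    return result.returncode == 0


if __name__ == "__main__":
    # Stand-in commands so the sketch runs anywhere.
    quick = [sys.executable, "-c", "pass"]
    hanging = [sys.executable, "-c", "import time; time.sleep(30)"]
    print(passes_within(quick, 10))
    print(passes_within(hanging, 1))
```

In a real test, the script would end with something like `sys.exit(0 if passes_within([...], 10) else 1)`. Note that the timeout only kills the direct child process; if that child spawns its own sub-processes, the ulimit approach is the more robust choice.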

Q6. Can I use multiple files for reduction with C-Reduce?

While many examples primarily use a single source file for reduction, it's important to note that C-Reduce supports reductions involving more than one source code file, commonly referred to as multi-file reduction. For instance, the Hello World example split across multiple source files can also be executed as:

```bash
creduce interestingness.sh array.cpp array.h hello.cpp hello.h main.cpp
```

where the interestingness.sh script looks like:

```bash
#!/bin/bash

g++ -std=c++17 main.cpp hello.cpp array.cpp -o hello || exit 1
./hello | grep -q "Hello World"
```

When multiple files are provided for reduction, C-Reduce attempts to reduce them together, taking the interaction between the files into account. In our case, the above command produced the following output:

```text
$ cat main.cpp
void sayHello();
int main() { sayHello(); }

$ cat hello.cpp
#include <iostream>
void sayHello() { std::cout << "Hello World"; }
```

Note that array.cpp is eliminated in this case.

Q7. Do I need to preprocess the input source code?

No, it's not a requirement to preprocess the input code. The rationale behind using preprocessed input code for reduction is to generate a test code that can effectively reproduce a bug in isolation, without relying on external code or header files. This becomes particularly important when sharing the reproducer with compiler engineers and support teams.

If, for some reason, reducing preprocessed code is not possible, one can opt to reduce an original, non-preprocessed source file. In such cases, it's necessary to set the CREDUCE_INCLUDE_PATH environment variable to a colon-separated list of include directories. This ensures that clang_delta can locate the necessary include files. Alternatively, the approach of multi-file reduction on the file and its transitive includes can also be employed.
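As a small illustration of the CREDUCE_INCLUDE_PATH setup, the Python sketch below builds the colon-separated value and places it in an environment that can be passed to the reducer. The helper name and the directory names are hypothetical.

```python
import os


def env_with_include_path(include_dirs, base_env=None):
    """Return a copy of the environment with CREDUCE_INCLUDE_PATH set.

    The variable is a colon-separated list of include directories.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["CREDUCE_INCLUDE_PATH"] = ":".join(include_dirs)
    return env


if __name__ == "__main__":
    # Hypothetical include directories, for illustration only.
    env = env_with_include_path(["/opt/project/include", "/opt/deps/include"])
    print(env["CREDUCE_INCLUDE_PATH"])
    # The environment could then be passed on, for example:
    # subprocess.run(["creduce", "./interestingness.sh", "file.cpp"], env=env)
```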

Q8. More tips for writing better interestingness tests?

Not much I can think of for now. However, since the C-Reduce website hasn't been updated, and the master branch isn't aligned with the upcoming release branch (2.11), I'd like to directly copy some insightful suggestions from the original authors (I hope that's okay!):

- If C-Reduce gives you crazy, undesirable results, you probably need to refine your interestingness test.

- C-Reduce will work poorly if the interestingness test is non-deterministic. You'll need to turn off ASLR if the compiler bug you are dealing with might involve a memory safety violation.

- If the interestingness test runs compiled code, this must be done under a time limit (and perhaps also under a memory limit).

- The interestingness test should run cheap parts of its test first. In particular, the very first thing any test should do is try to exit as rapidly as possible upon detecting a syntactically invalid program.

- The interestingness test can filter out some kinds of undesirable variants by inspecting compiler warnings.

- Use a heavyweight undefined behavior checker to filter out undesirable variants if compiler warnings aren't doing the job.

Some of these suggestions are quite useful in the context of HPC development. For example, when working with OpenACC/OpenMP applications, if the compilation times for GPU are notably higher than CPU compilation, the interestingness test can utilize CPU-based checks to swiftly exclude uninteresting variants. The heavier parts of the interestingness test can then be executed later.
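The "cheap checks first" idea can be sketched as a list of prerequisite stages that run in order and stop at the first failure. This is a minimal Python sketch; the function name `first_failing_stage` and the stage names are hypothetical, and the commands are stand-ins for a real syntax check, CPU build, and GPU build.

```python
import subprocess
import sys


def first_failing_stage(stages):
    """Run (name, command) stages in order.

    Return the name of the first stage whose command exits non-zero,
    or None if every stage passes. Later stages are not run after a failure.
    """
    for name, cmd in stages:
        result = subprocess.run(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
        if result.returncode != 0:
            return name
    return None


if __name__ == "__main__":
    # Stand-in commands so the sketch runs anywhere; in a real test these
    # would be a fast syntax check, a CPU build, then the slow GPU build.
    stages = [
        ("syntax", [sys.executable, "-c", "pass"]),
        ("cpu-build", [sys.executable, "-c", "raise SystemExit(1)"]),
        ("gpu-build", [sys.executable, "-c", "pass"]),
    ]
    print(first_failing_stage(stages))
```

Here the expensive "gpu-build" stage never runs, because the cheaper "cpu-build" stage already rejected the variant. The actual check for the bug being reduced would follow once all prerequisite stages pass.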

Q9. What are the most interesting options for C-Reduce?

Here are the CLI options that I find most relevant for (my) common usage:

```text
$ creduce --help
...
Summary of options:

  --add-pass <pass> <sub-pass> <priority>
      Add the specified pass to the schedule
  --also-interesting <exitcode>
      A process exit code (somewhere in the range 64-113 would be usual)
      that, when returned by the interestingness test, will cause
      C-Reduce to save a copy of the variant [default: -1]
  --n <N>
      Number of cores to use; C-Reduce tries to automatically pick a good
      setting but its choice may be too low or high for your situation
  --no-default-passes
      Start with an empty pass schedule
  --no-give-up
      Don't give up on a pass that hasn't made progress for 50000 iterations
  --no-kill
      Wait for parallel instances to terminate on their own instead of
      killing them (only useful for debugging)
  --not-c
      Don't run passes that are specific to C and C++, use this mode for
      reducing other languages
  --print-diff
      Show changes made by transformations, for debugging
  --remove-pass <pass> [sub-pass]
      Remove all instances of the specified pass from the schedule.
  --save-temps
      Don't delete /tmp/creduce-xxxxxx directories on termination
  --skip-initial-passes
      Skip initial passes (useful if input is already partially reduced)
  --sllooww
      Try harder to reduce, but perhaps take a long time to do so
  --timeout
      Interestingness test timeout in seconds [default: 300]

usage: creduce [options] interestingness_test file_to_reduce [optionally, more files to reduce]
       creduce --help for more information
```
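Of these, --also-interesting is probably the least self-explanatory. One way an interestingness test might use it, sketched in Python under my own assumptions, is to flag variants that crash the compiler with a different message than the bug being reduced, so that C-Reduce saves a copy of them. The function name, the target message, and the crash marker below are all hypothetical.

```python
ALSO_INTERESTING = 64  # must match the value passed to --also-interesting


def exit_code_for(compiler_output, target, crash_marker):
    """Map compiler output to an interestingness exit code.

    0  -> the variant still shows the bug being reduced (`target` found)
    64 -> a crash is visible but with a different message, worth saving
    1  -> uninteresting
    """
    if target in compiler_output:
        return 0
    if crash_marker in compiler_output:
        return ALSO_INTERESTING
    return 1


if __name__ == "__main__":
    # Hypothetical messages, for illustration only.
    target = "assertion failed in pass_foo"
    marker = "internal compiler error"
    print(exit_code_for("internal compiler error: assertion failed in pass_foo", target, marker))
    print(exit_code_for("internal compiler error: something else", target, marker))
    print(exit_code_for("warning: unused variable", target, marker))
```

The real test would capture the compiler output, call such a function, and exit with the returned code, while the reduction itself is launched with `creduce --also-interesting 64 ...`.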

Closing Thoughts

When I first encountered C-Reduce, my initial impression was, "It's a cool tool for streamlining compiler bug reduction. How can this be beneficial for an application developer like me?" I must admit, I was mistaken. The more I delved into it and observed its application by others, the more I grasped its strength. For those prone to encountering compiler bugs, I strongly recommend becoming acquainted with the C-Reduce tool. I am confident it will prove to be a time-saver, whether or not you decide to submit bug reports to compiler developers. If you choose to do so, your reproducers will capture attention and garner faster feedback!

In the field of HPC, we consistently leverage the latest and most advanced compiler toolchains with support for evolving programming models. Consequently, encountering compiler bugs is not uncommon. Reflecting on my 12 years of work, I find it somewhat surprising that I didn't come across advice such as, "Hey, if you encounter this bug and reproducer creation is challenging, give C-Reduce a try." Additionally, this tool wasn't mentioned by compiler support teams or in various tutorials focused on application porting. So, I have two concrete suggestions if I may 🙂 :

- To the compiler vendors: When shipping compiler toolchains, it would be beneficial to consider bundling or, at the very least, advertising a tool like C-Reduce or C-Vise. Instead of a generic "segmentation fault" error, pointing users to the documentation of tools like C-Reduce or C-Vise could be more helpful. Moreover, what if compilers started supporting CLI options like "--creduce" to initiate the reduction process for certain types of issues? There are details involved, but I am sure this could prove to be a win-win for both end users and compiler developers.

- To the HPC support staff: When instructing domain scientists on porting complex scientific applications, it would be highly beneficial to introduce tools like C-Reduce. Making these tools easily accessible, such as through modules, and incorporating them into documentation can enhance their visibility and utility for users.

In the PLDI'2012 paper, the authors of C-Reduce made the following statement:

Our belief is that many compiler bugs go unreported due to the high difficulty of isolating them. When confronted with a compiler bug, a reasonable compiler user might easily decide that the most economic course is to find a workaround, and not to be “sidetracked” by the significant time and effort required to produce a small test case and report the bug.

Agreed, even as a non-compiler developer, I can attest to this firsthand! The more we make the process and tools like C-Reduce accessible and efficient, the more we will attract end-users and application developers to report compiler bugs.

References

Here are some useful references to learn more about C-Reduce:

- A relatively recent two-part blog series by Prof. John Regehr gives quite good insights into the various aspects of C-Reduce: Part 1 and Part 2

- PLDI'2012 paper: Test-case reduction for C compiler bugs

- Code: See GitHub repository csmith-project/creduce

- Documentation: The README in the GitHub repository is dated and I have opened a GitHub issue #268. Until the website or GitHub repo is updated, you have a few options:

  - Web archived copy of the previous website

  - The README from the upcoming 2.11 release

- Other blogs:

- C-Reduce Dev Mailing List

Credits

First and foremost, credit for developing this excellent tool and information in this blog post goes to the main developers of C-Reduce and clang_delta: Prof. John Regehr and his group at the University of Utah. Special thanks are also due to Martin Liška, who has been maintaining the C-Vise tool and providing support to users in various ways. Finally, I want to express my gratitude to Olli Lupton, my former colleague at the Blue Brain, who introduced many of us to C-Reduce and demonstrated its effectiveness. He shared numerous insights and examples from his usage. Thanks to him, I started exploring C-Reduce and gained valuable insights for its effective usage!